# DAHN -- Human Experience of the MAP

To unlock the power of the MAP, human agents need a way to visualize and interact with the information it manages and the actions it offers. In conventional applications, this capability would be offered by the application's *User Interface* (UI). For a variety of reasons, however, the conventional UI approach falls short of MAP goals.

### Critique of Current User Interfaces

* ***Application-centric vs. Human-Centric***. Conventional *user interfaces* are designed around providing effective access to an *application.* If I, as a human user, need to interact with multiple applications, I am faced with a fragmented experience. The MAP flips this around. Instead of asking, what is the most effective way to interact with this application, the MAP asks, what is the full range of capabilities a human agent requires access to and how can we optimize *their* experience *across* *all* of those applications?

* ***User Interface (UI) vs. Human Interface (HI)***. The application-centric nature of conventional *user interfaces* is reflected in their very terminology. By referring to human agents as *users*, they reduce people to the limited role they play with respect to that application. But humans are complex, multi-dimensional beings with rich, highly textured lives. In keeping with the principles of [#primacy-of-the-individual](https://evomimic.gitbook.io/map-book/core-principles-of-the-map#primacy-of-the-individual "mention") and [#technology-serves-life](https://evomimic.gitbook.io/map-book/core-principles-of-the-map#technology-serves-life "mention"), the MAP favors the terms *human experience* (HX) and *human interface* (HI) over the more conventional terms *user experience* (UX) and *user interface* (UI). The MAP aims to foster the fullest expression of the creative potential of every person and to catalyze the self-organizing relationships of humans to each other. Choosing the term *human* reminds and even challenges MAP designers to engage with people on multiple levels beyond the simply utilitarian notion of *user* -- emotionally, creatively, socially, and spiritually.

> Only drug dealers and software companies call their customers 'users'
>
> -Edward Tufte

* ***Organization-centric vs. Human-Centric***. Conventional applications are built by and for ***organizations***. Their user interfaces aim for consistency in *look and feel* across the interfaces of the *organization*, not consistency across the experience of a *human agent*. The choice of look and feel is made by the organization to serve *its* aims. This approach creates an inherent ***power asymmetry***. The power the organization wields over my experience dwarfs my power over that experience. And the level of investment large organizations can make in their user interfaces dwarfs the investment small organizations can make. Compare the power of Amazon's user interface vs. the UI for your local bookstore's website. This organizational asymmetry fuels the *rich-get-richer*, *success-to-the-successful* dynamic (a *reinforcing feedback loop* in the terminology of *systems theory*) that drives polarization. The MAP is created for the ***benefit of empowered human agents***. The MAP intends to allow *human agents* to select the look and feel that serves *their* individual aims, *across* applications (and organizations).

* ***Closed vs. Open Information Spaces.*** Conventional user interfaces are built around the defined set of objects managed by their application. In other words, at UI design time, they know the types of information (i.e., the database schema) of the application and craft the user experience accordingly. In that sense, the design space is *closed*. Introducing a new type of information -- i.e., extending the database schema -- requires a change to the user interface. In contrast, the MAP intends to enable self-organizing human agents to continuously introduce new types of information and actions into the MAP. And the MAP also empowers human interface developers to (independently) introduce new visualizers to the MAP. The MAP aims to be ***open-ended*** in both the information and capabilities it offers and in the ways people can experience these capabilities.

* ***Push vs. Pull***. The user experience of conventional applications is intended to push products, services, ideas, feelings, etc. onto human agents. The MAP aims to help create ***pull-oriented interfaces*** in alignment with its [#pull-rather-than-push](https://evomimic.gitbook.io/map-book/core-principles-of-the-map#pull-rather-than-push "mention") principle.

The effects of these differences are profound. The MAP's *human-centric*, *open-ended, pull-oriented interface* allows me to discover, connect with, and engage in reciprocal value flows with a diverse range of individuals and organizations within a *unified* experience whose look and feel *I* have selected in service to *my* goals. Contrast that with the *fragmented* experience I have when interacting with a diverse range of organizations offering *organization-centric*, *push-oriented* interfaces, each buffeting me with highly sophisticated techniques aimed at persuading me to stay engaged with *their* site and to subscribe to whatever services, products, ideas, and/or feelings *they* are pushing in order to serve *their* organization's goals.

Furthermore, the human experience of the MAP extends beyond the people who use the MAP to guide their own personal and collective journeys, to include those who contribute to the evolution of the MAP human experience itself. HX designers, HI developers, artists, and others can contribute their creative endeavors to the open-ended unfolding of the MAP experience. To see how this is possible, we must first greet the new DAHN.

## Greet the New DAHN

DAHN -- the *Dynamic, Adaptive, Holon Navigator* -- is what makes the power of the MAP available to human agents. It provides a human interface (HI) enabling a human agent to navigate the MAP via a standard web browser.

### DAHN is a Holon Navigator

Unpacking the DAHN name, DAHN is referred to as a ***holon navigator*** because its underlying metaphor is ***empowered agents navigating a multi-dimensional graph***, where the nodes of the graph are *self-describing, active holons* of any type (e.g., *agents*, *memes*, *resources*, *services* and their sub-types) and the links are *holon relationships*. The self-describing nature of MAP holons means that when you visit a node you can discover its *holon type* and from that discover the *set of properties* that node has, the *set of actions* that node can perform, and the *set of relationships* that can be traversed from that node.

As one moves through the *MAP information graph*, there are choices to be made. For example, I can choose which of a node's actions I want to invoke and/or choose which of its relationships I want to navigate. Since most relationships are 1-to-many, the result of navigating a relationship is often a *collection of holons*. When presented with a collection, I may choose to *filter* that collection down to a subset of holons I care about. Note that regardless of whether the node is a person, a meme, an enterprise, a book, a community, a service, or any other holon, navigating the graph is possible with a very small number of primitive actions -- *visiting* a node, *invoking actions* (including editing holon properties and adding/removing related nodes), *traversing* relationships, and *filtering* collections.
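The four primitives described above can be sketched in a few lines. This is only an illustrative model, not the MAP's actual data structures: the `HolonType` and `Holon` classes, the function names `visit`, `invoke`, `traverse` and `filter_collection`, and the sample books and author are all hypothetical, chosen to show how a self-describing node lets a generic navigator work with any holon type.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class HolonType:
    """Hypothetical type descriptor: everything a holon of this type offers."""
    name: str
    properties: List[str]
    actions: Dict[str, Callable[["Holon"], object]]
    relationships: List[str]


@dataclass
class Holon:
    """Hypothetical graph node that carries a reference to its own type."""
    holon_type: HolonType
    values: Dict[str, object]
    related: Dict[str, List["Holon"]] = field(default_factory=dict)


def visit(holon: Holon) -> dict:
    """Visit a node: discover its type, properties, actions, and relationships."""
    t = holon.holon_type
    return {
        "type": t.name,
        "properties": {p: holon.values.get(p) for p in t.properties},
        "actions": sorted(t.actions),
        "relationships": list(t.relationships),
    }


def invoke(holon: Holon, action: str) -> object:
    """Invoke one of the actions declared by the holon's type."""
    return holon.holon_type.actions[action](holon)


def traverse(holon: Holon, relationship: str) -> List[Holon]:
    """Traverse a relationship; the result is a collection of holons."""
    if relationship not in holon.holon_type.relationships:
        raise KeyError(relationship)
    return list(holon.related.get(relationship, []))


def filter_collection(holons: List[Holon], keep: Callable[[Holon], bool]) -> List[Holon]:
    """Filter a collection down to the holons the agent cares about."""
    return [h for h in holons if keep(h)]


# Illustrative data only.
book_type = HolonType(
    "Book", ["title", "year"],
    {"describe": lambda h: f"{h.values['title']} ({h.values['year']})"}, [])
person_type = HolonType("Person", ["name"], {}, ["authored"])

older = Holon(book_type, {"title": "First Book", "year": 1999})
newer = Holon(book_type, {"title": "Second Book", "year": 2021})
author = Holon(person_type, {"name": "Example Author"}, {"authored": [older, newer]})

recent = filter_collection(traverse(author, "authored"), lambda h: h.values["year"] > 2000)
print(visit(author)["relationships"], [invoke(b, "describe") for b in recent])
```

Note that none of the four functions mentions books or people. Everything type-specific is discovered from the node at the moment it is visited.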

Just as there are choices to be made in navigating an *information graph*, presenting information to human agents involves a cascading set of ***presentation choices*** regarding layout, themes, language and cultural conventions (i.e., internationalization/localization), widget selection and configuration, and more.

DAHN decouples *information navigation choices* from *presentation choices*. This separation of *information* from its *presentation* is powerful because the way one *navigates* the information graph places no constraint on how that graph is *visualized*: the same *information graph* can be visualized in a variety of different ways.

The *navigation choices* for any journey through the MAP can be expressed as a *graph expression* that can be saved for subsequent replay. In the real world, when we repeat the itinerary of a previous trip, we will typically discover that things have changed since the last time we were there. Often we experience a strange mix of the familiar and the unusual. Similarly, when replaying a DAHN saved view, we may well discover that things have changed since the last time we were there.
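To make the idea of saving and replaying navigation choices concrete, here is a minimal sketch. The format of the expression (a `start` node plus a list of `traverse` and `filter` steps), the `replay` function, and the tiny in-memory graph are all assumptions made for illustration; the MAP's actual graph expression language is not specified here.

```python
import json
from typing import Dict, List

# Hypothetical in-memory graph: node id -> properties and outgoing relationships.
Graph = Dict[str, dict]


def replay(graph: Graph, expression: dict) -> List[str]:
    """Re-run saved navigation choices against the graph as it exists now."""
    current = [expression["start"]]
    for step in expression["steps"]:
        if step["op"] == "traverse":
            current = [target
                       for node in current
                       for target in graph[node]["links"].get(step["relationship"], [])]
        elif step["op"] == "filter":
            current = [node for node in current
                       if graph[node]["props"].get(step["property"]) == step["equals"]]
        else:
            raise ValueError(f"unknown step: {step['op']}")
    return current


graph: Graph = {
    "library": {"props": {}, "links": {"holds": ["b1", "b2"]}},
    "b1": {"props": {"language": "en"}, "links": {}},
    "b2": {"props": {"language": "fr"}, "links": {}},
}

expression = {
    "start": "library",
    "steps": [
        {"op": "traverse", "relationship": "holds"},
        {"op": "filter", "property": "language", "equals": "en"},
    ],
}

saved = json.dumps(expression)  # persist the choices, not the results
first_visit = replay(graph, json.loads(saved))

# The graph changes between visits...
graph["b3"] = {"props": {"language": "en"}, "links": {}}
graph["library"]["links"]["holds"].append("b3")

# ...so replaying the same choices yields a different result.
second_visit = replay(graph, json.loads(saved))
print(first_visit, second_visit)
```

Because only the choices are stored, the saved view stays small and always reflects the current state of the graph when it is replayed.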

DAHN allows the way people visualize and interact with the MAP to continuously evolve without depending upon a centralized designer (or centralized team of designers). In keeping with the spirit of the rest of the MAP, DAHN is, itself, self-organizing. It empowers people to personalize their own experience and, in so doing, to contribute to the crowd-sourced emergent design. Thus, the default visualization that is available for everything in the MAP is continuously evolving and reflects the collective contributions of prior MAP explorers. These explorers include HI developers, who may create highly specific visualizations and seed them into DAHN's evolutionary process. The ability to shape your experience in highly personal ways is available to every MAP explorer. And, in doing so, each explorer indirectly evolves the collective experience.

DAHN's design concept is introduced in the following figure:

DAHN dynamically generates a human interface (HI) within a standard web browser that enables human agents to navigate the *memes*, *agents*, *services* and *resources* of the MAP. DAHN includes an extensible set of type-specific ***Visualizers*** that are contributed by *Human Interface (HI) Developers*. *Visualizers* are software resources that both present and allow interaction with the MAP's ***self-describing holons***. DAHN's ***selector function*** selects the visualizers to present, continuously ***adapting*** to the evolving landscape of holon types and visualizers, as well as individual and crowd-sourced agent preferences and various environmental factors. DAHN's ***interface generator*** uses graph queries to extract desired subsets of the MAP information graph and marshals that data into the ***visualizers*** selected by the DAHN *selector function* in order to generate the human interface.

DAHN aims to present reasonable default visualizations for everything in the MAP without requiring any HI development effort, while still allowing HI developers to contribute. In alignment with the [#primacy-of-the-individual](https://evomimic.gitbook.io/map-book/core-principles-of-the-map#primacy-of-the-individual "mention") principle, DAHN also gives human agents considerable control over their experience.

### DAHN is Dynamic

When and by whom presentation decisions are made can vary. In conventional applications, most of the presentation choices are made by a human experience (HX) designer at *design-time* (i.e., prior to or during the implementation of the human interface) based on their knowledge of the specific information space to which they are aiming to provide access. These can be characterized as ***static choices*** -- they are fixed by the *HX Designer*.

***By contrast, DAHN is heavily skewed towards deferring HX choices to run-time (i.e., DAHN is dynamic).*** This follows directly from the open-ended nature of the MAP. At design-time, we don't know what subjects we will encounter. The design of DAHN is informed by the desire to favor the *generative complexity* of living systems over the *designed complication* of human-engineered systems. Thus, just as we have seen with the small set of basic elements comprising the [core layers of the MAP ontology](https://evomimic.gitbook.io/map-book/map-foundations#layer-1-memes-resources-agents-and-services), our challenge is to identify a minimal set of presentation elements from which we can generate an open-ended, compelling human experience of the MAP.

In response to this challenge, DAHN's fundamental presentation element is a ***visualizer***. *Visualizers* both present and allow interaction with the MAP's *self-describing holons*. Some visualizers are provided by the core DAHN platform, others are contributed by *Human Interface (HI) Developers* via [*DAHN Commons* or *DAHN Markets*](https://evomimic.gitbook.io/map-book/dahn-human-experience-of-the-map/dahn-commons-and-markets).

Visualizers come in the following flavors:

* ***Canvas Visualizers*** -- present the outermost visual container for a *DAHN view*. All other DAHN elements are presented within the boundaries of this container. DAHN provides at least one standard canvas visualizer (DAHN-2D Canvas). Each *DAHN view* is rooted in a single *canvas visualizer*, selected by the human agent. The *canvas visualizer* offers some top-level *actions,* a set of CSS styles that define the theme (look and feel) for that *view* and a dynamically evolving set of child *node visualizers*, *graph visualizers* and *collection visualizers*.

* ***Action Visualizers*** -- present and allow invocation of the *actions* offered by any of the other visualizers or of the holons themselves (e.g., via buttons and menus).

* ***Node*** ***Visualizers*** -- present and allow interaction with various aspects of an individual holon (i.e., a meme, resource, agent or service). *Node visualizers* are type-specific. By default, DAHN includes a *Holon Node Visualizer* capable of presenting *any* type of holon*.* Additional type-specific *node visualizers* can be contributed by HI developers. The choice of node visualizer used for any given type of holon within a *view* is determined by the *DAHN selector function*. Each *node visualizer* within a *view* can have one or more *property visualizers*.

* ***Property Visualizers*** -- present and allow interaction with a specific type of holon property. DAHN provides a set of default property visualizers for a range of property types (e.g., string, number, date, enumerated value, measured quantity, image, sound, video, etc.). Over time the set of property visualizers will be extended by the DAHN core team and other HI developers. The choice of *property visualizer* used for any given type of *holon property* within a *view* is determined by the *DAHN selector function*.

* ***Graph Visualizers*** -- present interconnections between the holons and holon collections that comprise the *information graph* associated with a *view*. Graph visualizers provide both the visual representation of the graph as nodes and lines and also provide human agents the ability to define the filters that determine what holons and relationships are included in that filtered graph. The set of filtering rules used to generate this graph can be persisted as part of the *saved view*. *Graph visualizers* can present (top-level) graphs within the *canvas viewing area* or within the *node viewing area* of a *node visualizer*.

* ***Collection Visualizers*** -- present and allow interaction with a collection of like objects (e.g., a list of agents or a list of memes). *Collection visualizers* are holon type-specific. By default, DAHN includes a *Holon Collection Visualizer* capable of presenting any type of holon collection*.* This visualizer offers a choice of collection layouts (table, grid, geographic map, etc.). Additional type-specific *collection visualizers* can be contributed to the DAHN P-Space by HI developers. The choice of collection visualizer used for any given type of holon collection within a *view* is determined by the *DAHN selector function*. Collection visualizers provide human agents the ability to define filters applied to the collection. These filters are persisted as part of the *saved view*.
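The relationship between default and type-specific visualizers described in the list above can be sketched as a registry with a fallback. This is a deliberately simplified stand-in for the *DAHN selector function*: the `Visualizer` and `VisualizerRegistry` classes and the `select` function are hypothetical, and the rule used here (prefer a type-specific visualizer, otherwise fall back to the default) ignores the preference-driven selection discussed later in this chapter.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Visualizer:
    name: str
    flavor: str                        # e.g. "node", "property", "collection"
    target_type: Optional[str] = None  # None: handles any type (a default visualizer)


class VisualizerRegistry:
    """Hypothetical registry of core and contributed visualizers."""

    def __init__(self) -> None:
        self._visualizers: List[Visualizer] = []

    def register(self, visualizer: Visualizer) -> None:
        self._visualizers.append(visualizer)

    def candidates(self, flavor: str, target_type: str) -> List[Visualizer]:
        """All visualizers able to present target_type, type-specific ones first."""
        same_flavor = [v for v in self._visualizers if v.flavor == flavor]
        specific = [v for v in same_flavor if v.target_type == target_type]
        generic = [v for v in same_flavor if v.target_type is None]
        return specific + generic


def select(registry: VisualizerRegistry, flavor: str, target_type: str) -> Visualizer:
    """Simplified stand-in for the selector function: take the first candidate."""
    options = registry.candidates(flavor, target_type)
    if not options:
        raise LookupError(f"no {flavor} visualizer available for {target_type}")
    return options[0]


registry = VisualizerRegistry()
registry.register(Visualizer("Holon Node Visualizer", "node"))                 # core default
registry.register(Visualizer("Holon Collection Visualizer", "collection"))     # core default
registry.register(Visualizer("Book Node Visualizer", "node", "Book"))          # contributed

print(select(registry, "node", "Book").name)
print(select(registry, "node", "Person").name)
```

Because a default exists for every flavor, a newly introduced holon type is presentable immediately, and a contributed visualizer for that type simply becomes one more candidate.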

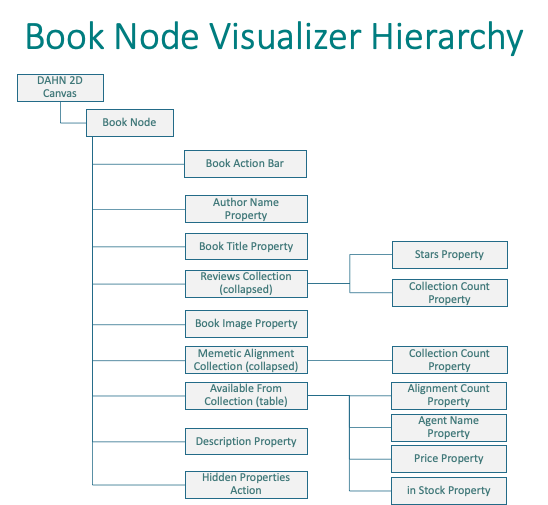

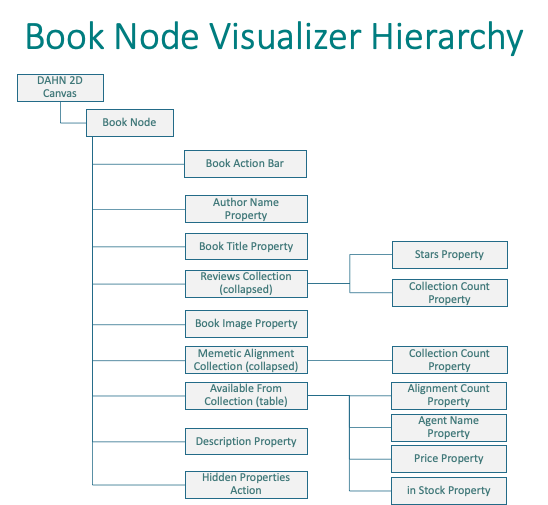

Structurally, visualizers are organized at run-time into a ***visualizer usage hierarchy*** that follows the modular principles of [atomic design](https://atomicdesign.bradfrost.com) (in spirit, if not in terminology). The root of the hierarchy is a usage of the selected *canvas visualizer*. As the human agent explores the MAP, visiting different types of nodes and their properties, navigating different relationships, filtering collections, etc., the *DAHN Selector Function* makes choices about which visualizer to use and adds a usage for each selected visualizer to the hierarchy. The following figure shows the subset of this hierarchy related to a *Book Node visualizer.*

Given the open-ended nature of DAHN and the ability for HI developers to contribute different visualizers, ***it is possible for multiple alternate visualizers to exist at every level of this hierarchy***. For example, the standard *Holon Node Visualizer* is available as an alternative to the *Book Node visualizer*. Putting aside for the moment the question of how the *DAHN Selector Function* selects a visualizer from among several alternative possible visualizers, what if the human agent would like to make a different choice?

Each visualizer includes a *personalization control*. ***Dragging*** a visualizer's *personalization control* changes the display order and visibility of that visualizer. ***Clicking*** on a *personalization control* allows the human agent to see if there are any alternative visualizers and, if so, to select one.

Selecting a different visualizer will cause the display to be re-rendered using the selected visualizer. Since alternate visualizer selection can happen at any level of the visualizer hierarchy, human agents are empowered with significant control over their experience.
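As a rough illustration of the usage hierarchy and of swapping a visualizer at one level, consider the sketch below. The `Usage` class and the `select_alternative` and `outline` functions are hypothetical, as are the two property visualizer names. The sketch also assumes that child usages are kept when a parent visualizer is swapped, which a real implementation might not do.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Usage:
    """One node in the (hypothetical) visualizer usage hierarchy."""
    visualizer: str
    alternatives: List[str] = field(default_factory=list)
    children: List["Usage"] = field(default_factory=list)


def select_alternative(usage: Usage, name: str) -> None:
    """Swap in an alternative visualizer at this level of the hierarchy."""
    if name not in usage.alternatives:
        raise ValueError(f"{name!r} is not an alternative for {usage.visualizer!r}")
    # The replaced visualizer becomes an alternative, so the choice is reversible.
    usage.alternatives[usage.alternatives.index(name)] = usage.visualizer
    usage.visualizer = name


def outline(usage: Usage, depth: int = 0) -> List[str]:
    """Re-render the hierarchy as an indented outline."""
    lines = ["  " * depth + usage.visualizer]
    for child in usage.children:
        lines.extend(outline(child, depth + 1))
    return lines


book = Usage(
    "Book Node Visualizer",
    alternatives=["Holon Node Visualizer"],
    children=[Usage("String Property Visualizer"), Usage("Date Property Visualizer")],
)
canvas = Usage("DAHN-2D Canvas", children=[book])

before = outline(canvas)
select_alternative(book, "Holon Node Visualizer")
after = outline(canvas)
print("\n".join(after))
```

The swap touches a single usage, and the outline is simply regenerated from the root, which mirrors the re-rendering behavior described above.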

Of course, this control comes at a cost. Tweaking personalization controls takes time and cognitive effort -- time and effort that could be better spent exploring the MAP content, offering and invoking services, discovering or creating new memes, etc.

DAHN helps minimize this cost in two significant ways:

1. ***By "remembering" an agent's personalization choices*** so that when they employ visualizer types they have previously used, any previously set personalizations can be applied. This can happen at all levels of the visualization hierarchy and for all holon types, collection types and property types.

2. ***By optimizing the default experience to reduce the need for individual agents to invoke personalizations to get an effective experience*** -- even in regions of the MAP they have never previously visited. This is accomplished by leveraging the collective personalization actions of MAP explorers who have previously visited that region of the MAP. This is perhaps the most novel (and the most powerful) aspect of the DAHN design and is the focus of the next section.

### DAHN is Adaptive

> Living systems, from a single cell to multi-cellular organisms and populations of interacting species, all have the amazing ability to adapt to changes in their environments. Adaptation allows living systems to adjust themselves in response to persistent changes of the environment.
>
> [Adaptation of Living Systems](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6060625/), Tu and Rappel, [National Center for Biotechnology Information](https://www.ncbi.nlm.nih.gov), 2017

The MAP is continuously evolving as *human* *agents* put themselves on the MAP, discover and connect with other agents into self-organizing *We-Spaces*, introduce and refine *memes*, offer *services* and the *software agents* that implement them, and engage in reciprocal value flows with other agents by accepting *service* *offers* and invoking those *services*. The ecosystem of MAP developers and thought leaders can introduce new types of holons, while Human Interface (HI) developers can introduce new DAHN visualizers.

This evolving swirl of changes unfolds in a decentralized way -- i.e., via empowered agents making their own contributions at their own pace. This is in dramatic contrast to the conventional application development model where a central organization carefully orchestrates the roll out of new features (and the human interface for interacting with them).

The motivation behind DAHN's adaptive nature is to provide the ability for it to continuously adapt the human experience of the MAP to these evolutionary changes (and also help agents contribute to these evolutionary changes) without the need for a centralized HI development process.

DAHN's adaptive capabilities both depend upon and build upon DAHN's dynamic capabilities. As noted above, DAHN *dynamically* makes a large number of decisions at run-time, i.e., at the point in time when the human interface is actually being generated.

DAHN is ***adaptive*** in the sense that each of these decisions is sensitive to a variety of contextual parameters. Over time, DAHN can make different decisions because the situation has changed.

Specifically, DAHN is adaptive in the following dimensions:

1. to different ***presentation device form factors*** by following the principles of [responsive design](https://en.wikipedia.org/wiki/Responsive_web_design). For example, layout, component sizes, and visibility choices are sensitive to screen sizes and aspect ratios that vary widely across smart watches, smart phones, tablets, laptops, and multi-monitor desktop layouts.

2. to ***language and cultural conventions*** by following the proven [internationalization](https://en.wikipedia.org/wiki/Internationalization_and_localization) (I18n) design patterns.

3. to different ***presentation*** ***themes*** (e.g., choices regarding colors, fonts, font sizes, button styles, etc.).

4. to the ***introduction and evolution of*** ***types of information*** being presented (e.g., new types of resources, agents, offers, memes, etc.)

5. to the ***introduction and evolution of visualizers***.

6. ***to individual agent preferences.*** In alignment with the principle of [#primacy-of-the-individual](https://evomimic.gitbook.io/map-book/core-principles-of-the-map#primacy-of-the-individual "mention"), DAHN grants substantial power for human agents to control their MAP experience.

7. ***to collective agent preferences.*** Individual agent preferences are fed back and aggregated within DAHN itself as a way of driving the crowd-sourced evolution of the MAP experience.

Dimensions 1 through 3 are adaptive features that most modern human interfaces support. But DAHN is believed to be novel in its support for dynamic and adaptive choices in dimensions 4 through 7.

Two concepts are leveraged across most of DAHN's adaptive features:

* ***salience*** -- what is of greater or lesser relative importance to me and

* ***affinity*** -- what things naturally belong together (*affinity group*) and a rank ordering of my personal affinity for something (*affinity score*)

For example, when choosing the *visibility* and *ordering* (top-to-bottom) of properties within a node visualizer's viewing area, it is possible to think of rank-ordering a holon type's properties based on their *salience*. Higher ranked properties are displayed nearer to the top. Properties that are below a *salience threshold* would not be displayed at all. The *salience threshold* could be responsive to the size of the viewing area so that more properties would be displayed if the viewing area is larger and fewer if it is smaller.
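The ordering and visibility rule just described might look something like the following sketch. Everything here is an assumption made for illustration: salience scores are taken to lie between 0 and 1, the viewing area is measured in rows, and the threshold is assumed to fall linearly as the area grows. The function names `salience_threshold` and `visible_properties` and the sample scores are hypothetical.

```python
from typing import Dict, List


def salience_threshold(viewing_rows: int, max_rows: int = 10) -> float:
    """Assumed rule: the threshold falls linearly as the viewing area grows."""
    rows = max(0, min(viewing_rows, max_rows))
    return 1.0 - rows / max_rows


def visible_properties(salience: Dict[str, float], viewing_rows: int) -> List[str]:
    """Rank properties by salience and drop those below the threshold."""
    threshold = salience_threshold(viewing_rows)
    ranked = sorted(salience, key=lambda name: salience[name], reverse=True)
    return [name for name in ranked if salience[name] >= threshold]


# Illustrative salience scores for the properties of a Book holon type.
book_salience = {"isbn": 0.2, "title": 0.9, "publisher": 0.4, "author": 0.8}

small = visible_properties(book_salience, viewing_rows=3)
large = visible_properties(book_salience, viewing_rows=7)
print(small, large)
```

With these sample scores, the smaller viewing area shows only the two most salient properties, and the larger one adds a third.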

Often there is a natural ***affinity*** between different properties (or actions). One clear example of this is with the various properties making up an address (street number, street name, unit or apartment number, city, state or province, country, postal code). This set of properties forms what DAHN refers to as an ***affinity group***.

*Affinity* can also be used to express a preference for a range of choices. For example, the *DAHN-2D Canvas* visualizer may support a number of presentation themes. In a given circumstance for a given human agent, DAHN selects the theme for which the canvas has the greatest *affinity score*.
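Both uses of affinity can be sketched briefly. In the first function, members of an affinity group are kept adjacent when properties are ordered. The sketch assumes that a group is ranked by its most salient member and that members keep the order in which the group declares them; neither rule comes from the DAHN design. The second function simply picks the option with the greatest affinity score. The names `order_with_groups` and `pick_by_affinity`, and all scores, are hypothetical.

```python
from typing import Dict, List, Tuple


def order_with_groups(salience: Dict[str, float],
                      groups: Dict[str, List[str]]) -> List[str]:
    """Order properties by salience while keeping affinity groups together."""
    member_to_group = {m: g for g, members in groups.items() for m in members}

    # A "unit" is either a whole affinity group or a single ungrouped property.
    units: Dict[Tuple[str, str], List[str]] = {}
    for prop in salience:
        group = member_to_group.get(prop)
        key = ("group", group) if group is not None else ("property", prop)
        units.setdefault(key, []).append(prop)

    def unit_rank(key: Tuple[str, str]) -> float:
        # Assumption: a group is as salient as its most salient member.
        return max(salience[p] for p in units[key])

    ordered: List[str] = []
    for kind, name in sorted(units, key=unit_rank, reverse=True):
        if kind == "group":
            ordered.extend(p for p in groups[name] if p in salience)
        else:
            ordered.append(name)
    return ordered


def pick_by_affinity(affinity: Dict[str, float]) -> str:
    """Select the option with the greatest affinity score."""
    return max(affinity, key=lambda option: affinity[option])


salience = {"name": 0.9, "city": 0.6, "phone": 0.5, "street": 0.3, "postal_code": 0.2}
groups = {"address": ["street", "city", "postal_code"]}

print(order_with_groups(salience, groups))
print(pick_by_affinity({"light": 0.4, "dark": 0.7, "high-contrast": 0.1}))
```

Without the affinity group, `phone` would be displayed between `city` and `street`, splitting the address.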

But where do *salience* and *affinity* scores come from? Who gets to decide?

DAHN's answer is to leverage ***personalization actions*** and ***navigation actions*** to determine (and evolve!) *salience* and *affinity*. There is no separate "survey" where we ask human agents to rank order, say, the salience of properties within a node. Rather, we interpret salience from their gesture of dragging a property higher or lower in the node's viewing area because they prefer to see it there. Similarly, when a human agent selects one visualizer over another, DAHN interprets that as them expressing a greater affinity for the selected visualizer and adjusts affinity scores accordingly. Generally speaking, the presentation and navigation gestures human agents naturally make as they shape their personal experience of the MAP are used by DAHN to infer personal salience and affinity scores.

**But DAHN also aggregates salience and affinity scores across all agents that use DAHN**. Thus, an individual agent that changes the display order of a property or action affects not only their own *salience scores* but also the *aggregate salience scores*. As more and more agents use (and personalize) a *visualizer type*, its *aggregate salience* and *affinity scores* reflect the collective actions of these agents -- **in effect, crowd-sourcing salience and affinity**.
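One way to picture this dual bookkeeping is a ledger that updates both score sets from a single gesture. The `AffinityLedger` class below is hypothetical, and the update rule (add a fixed step to the chosen visualizer and subtract it from the rejected one) is an assumption made only to keep the example small; the actual scoring scheme is left open by the design.

```python
from collections import defaultdict
from typing import DefaultDict, Dict


class AffinityLedger:
    """Hypothetical store of personal and aggregate affinity scores."""

    def __init__(self) -> None:
        self.personal: DefaultDict[str, Dict[str, float]] = defaultdict(dict)
        self.aggregate: Dict[str, float] = {}

    def record_selection(self, agent: str, chosen: str, rejected: str,
                         step: float = 1.0) -> None:
        """An agent picked `chosen` over `rejected`: shift both score sets."""
        for scores in (self.personal[agent], self.aggregate):
            scores[chosen] = scores.get(chosen, 0.0) + step
            scores[rejected] = scores.get(rejected, 0.0) - step


ledger = AffinityLedger()
ledger.record_selection("alice", "Book Node Visualizer", "Holon Node Visualizer")
ledger.record_selection("bob", "Book Node Visualizer", "Holon Node Visualizer")
ledger.record_selection("carol", "Holon Node Visualizer", "Book Node Visualizer")

print(ledger.personal["carol"])
print(ledger.aggregate)
```

Carol's personal scores favor the generic visualizer, while the aggregate scores still favor the one most agents selected. Both views are derived from the same gestures.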

{% hint style="info" %}

NOTE: the specific gestures used to interpret *salience* and *affinity* are specific to each visualizer and will be demonstrated in the sections devoted to each flavor of visualizer.

{% endhint %}

*Aggregate* *salience* and *affinity* scores can be leveraged to drive default DAHN behavior in a way that reflects the collective actions of prior agent interactions. For many agents, this may be all they require. But they always have the option to override the default behavior. And DAHN remembers their preference in the form of *personal* salience and affinity scores.

Note, however, that the next time this agent visits, say, the same type of holon or property, DAHN now has two factors to consider: (1) the agent's *personal salience score* and (2) the current value of the *aggregate salience score*.

One way to handle this would be to simply always use the agent's personal score. In other words, if an individual agent indicated a preference, always honor that preference. But doing so deprives the agent of the opportunity to have their experience improve as more visualizers are introduced, more properties are defined and populated, more relationships are populated, etc.

In effect there is a trade-off between ***predictability*** (always present things this way) and ***novelty*** (expose me to new stuff and the evolution of the collective wisdom of other agents).

Instead of dictating the choice, DAHN could allow human agents to make their own decision about the relative weight that should be given to personal scores vs. collective scores. This is an example of an ***adaptive control***. A set of possible ***adaptive controls*** is illustrated in the following diagram.

Collectively, these sliders can be adjusted to reflect the degree to which the agent is in *exploit* vs. *explore* modes. For tasks I do frequently, I can set up my personal preferences exactly the way I like them to perform that task efficiently. I don't want the experience changing out from underneath me (i.e., I want to *exploit* my prior selections), so I'll position the sliders to the left. But when I'm more interested in discovering what's new (i.e., in explore mode), I can move the sliders to the right for maximum novelty.

{% hint style="info" %}

NOTE: The controls shown are intended to illustrate a range of *possible* adaptive dimensions. Whether these or other dimensions prove most effective in practice will be determined via our implementation experience.

{% endhint %}

The effect of the **Personal vs Collective Weighting Slider** is to change the relative **weighting** of *personal* and *aggregate* *scores*. Moving the slider all the way to the left "pins" my personal preferences so that personal scores (where they exist) *always* take precedence. Moving the slider all the way to the right would give very strong weight to the aggregate scores. In this case, my personal preferences become temporary.
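A simple way to realize this weighting is a linear blend, sketched below. This is an assumption, not the DAHN algorithm: the slider is modeled as a number from 0 (far left) to 1 (far right), personal and aggregate scores are assumed to share a 0-to-1 scale, and the far-right position is treated as using the aggregate score alone. The function names `blended_score` and `choose` are hypothetical.

```python
from typing import Dict, Optional


def blended_score(personal: Optional[float], aggregate: float, slider: float) -> float:
    """Blend scores: slider 0.0 pins personal scores, 1.0 uses aggregate scores."""
    if not 0.0 <= slider <= 1.0:
        raise ValueError("slider must be between 0 and 1")
    if personal is None:
        # No personal preference recorded yet: fall back to the collective score.
        return aggregate
    return (1.0 - slider) * personal + slider * aggregate


def choose(personal: Dict[str, float], aggregate: Dict[str, float], slider: float) -> str:
    """Pick the option with the highest blended score."""
    return max(
        aggregate,
        key=lambda name: blended_score(personal.get(name), aggregate[name], slider),
    )


# Illustrative scores: this agent prefers the generic visualizer,
# while most other agents prefer the type-specific one.
personal = {"Holon Node Visualizer": 1.0, "Book Node Visualizer": 0.0}
aggregate = {"Holon Node Visualizer": 0.2, "Book Node Visualizer": 0.9}

print(choose(personal, aggregate, slider=0.0))
print(choose(personal, aggregate, slider=1.0))
```

At intermediate positions the personal preference persists until the collective preference becomes strong enough to outweigh it, which is one reading of personal preferences becoming "temporary".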

The ***Trending Slider*** applies only to *aggregate scores*. Consider the selection of a particular visualizer from among several choices. A simple count of the number of human agents that had opted for each choice would give a rank-ordering based on overall popularity. However, always selecting based on overall popularity could make it difficult for new (and presumably, improved) visualizers to gain traction. The concept of *trending* could be leveraged to allow selection based on recent aggregate preferences. Positioning the slider all the way to the left gives weight to the all-time popular choice. Moving the slider to the right gives weight to shorter and shorter trending intervals -- for example, to the visualizer that is trending upwards most strongly over the past year, or month, or day, or hour.

{% hint style="info" %} Note to DAHN implementers: Refer to [**Implementing Real-Time Trending Topics with a Distributed Rolling Count** ](https://www.michael-noll.com/blog/2013/01/18/implementing-real-time-trending-topics-in-storm/)and/or [**How the Twitter Trending Algorithm works in 2022**](https://blog.hootsuite.com/twitter-algorithm/) for ideas on trending algorithms.

{% endhint %}
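As one possible reading of the *Trending Slider*, the sketch below weights each past selection by its age using exponential decay. This technique is swapped in for illustration and is not taken from the articles referenced above. The mapping from slider position to half-life (no decay at the far left, about one hour at the far right, one year just off the far left) is an assumption, as are the function names `half_life_days` and `trending_scores`.

```python
import math
from typing import Dict, Iterable, Tuple


def half_life_days(slider: float) -> float:
    """Assumed mapping: 0 = all-time (no decay); 1 = roughly one hour."""
    if not 0.0 <= slider <= 1.0:
        raise ValueError("slider must be between 0 and 1")
    if slider == 0.0:
        return math.inf
    longest, shortest = 365.0, 1.0 / 24.0
    # Interpolate geometrically between one year and one hour.
    return longest * (shortest / longest) ** slider


def trending_scores(selections: Iterable[Tuple[str, float]],
                    slider: float) -> Dict[str, float]:
    """Sum selections per visualizer, halving a selection's weight every half-life.

    Each selection is (visualizer name, age of the selection in days).
    """
    half_life = half_life_days(slider)
    scores: Dict[str, float] = {}
    for name, age_days in selections:
        scores[name] = scores.get(name, 0.0) + 0.5 ** (age_days / half_life)
    return scores


# Illustrative history: an established visualizer and a recent newcomer.
selections = [("Classic", 400.0)] * 100 + [("Newcomer", 1.0)] * 10

all_time = trending_scores(selections, slider=0.0)
recent = trending_scores(selections, slider=0.5)
print(max(all_time, key=all_time.get), max(recent, key=recent.get))
```

With the slider at the far left every selection counts equally, so the established visualizer wins on raw popularity. Moving the slider to the right discounts old selections, which gives the newcomer a chance to gain traction.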

The ***Maturity Slider*** governs visualizer selection based on the ***release maturity*** of the different visualizers. Generally speaking, software that has been in production for a long time is more stable (i.e., has fewer bugs) than more recently released software. But such software also does not offer the latest features. If I am in *explore* *mode*, I may want to check out visualizers that are in alpha or beta release. If I am in *exploit mode*, I may prefer visualizers that have been around for multiple releases.

The ***Randomness Slider*** introduces a degree of randomness affecting all of the other dimensions. For example, even if I have positioned my *Maturity Slider* to favor only mature visualizers, the *DAHN Selector Function* may randomly select less mature visualizers. Positioning the randomness slider to the left reduces the overall randomness in selections. Moving it to the right increases the range of random effects.
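The interplay of the *Maturity Slider* and the *Randomness Slider* can be sketched with a rule in the style of epsilon-greedy selection: most of the time pick the best eligible visualizer, and occasionally pick at random. This is an assumed technique, not the DAHN design. The three maturity levels, the mapping of slider thirds to levels, the candidate names, and the function names `minimum_maturity` and `select_visualizer` are all hypothetical.

```python
import random
from typing import Dict

MATURITY_RANK = {"alpha": 0, "beta": 1, "stable": 2}


def minimum_maturity(maturity_slider: float) -> str:
    """Assumed mapping: left third = stable only, middle = beta and up, right = anything."""
    if maturity_slider < 1 / 3:
        return "stable"
    if maturity_slider < 2 / 3:
        return "beta"
    return "alpha"


def select_visualizer(candidates: Dict[str, dict], maturity_slider: float,
                      randomness_slider: float, rng: random.Random) -> str:
    """Pick the best-scoring eligible visualizer, with occasional random picks.

    candidates maps a visualizer name to {"maturity": ..., "score": ...}.
    """
    names = sorted(candidates)
    # With probability randomness_slider, ignore the other settings entirely.
    if rng.random() < randomness_slider:
        return rng.choice(names)
    floor = MATURITY_RANK[minimum_maturity(maturity_slider)]
    eligible = [n for n in names if MATURITY_RANK[candidates[n]["maturity"]] >= floor]
    pool = eligible or names  # fall back if nothing is mature enough
    return max(pool, key=lambda n: candidates[n]["score"])


candidates = {
    "Holon Node Visualizer": {"maturity": "stable", "score": 0.6},
    "Book Node Visualizer": {"maturity": "beta", "score": 0.8},
    "Experimental Book Visualizer": {"maturity": "alpha", "score": 0.9},
}

rng = random.Random(0)  # seeded only to make the example repeatable
print(select_visualizer(candidates, maturity_slider=0.0, randomness_slider=0.0, rng=rng))
print(select_visualizer(candidates, maturity_slider=1.0, randomness_slider=0.0, rng=rng))
```

With the randomness slider at the far left the selection is fully determined by the other settings. Any position to the right of that lets a less mature visualizer appear occasionally, even when the maturity slider favors stable releases.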

**Copyright (c) 2022, this book is offered to the world under Creative Commons license** [**CC BY-NC-SA 4.0**](https://creativecommons.org/licenses/by-nc-sa/4.0/)